Noise as a Tool to Make Neural Networks More Reliable

Researchers at Saratov National Research State University named after N. G. Chernyshevsky have found that deliberately introducing random noise during the training of neural networks can make them more resilient to interference in real-world operation.

The research team proposed an unconventional idea: instead of trying to eliminate internal noise in hardware systems, treat it as a mechanism for improving reliability. To test that hypothesis, the scientists rethought how artificial intelligence models are trained.

Today, most neural networks run in digital form on conventional computers and graphics processors. These systems require vast computational resources and consume significant energy, which in turn limits their scalability. That constraint has accelerated interest in hardware neural networks – physical devices in which neurons and synaptic connections are implemented directly in electronic or other physical elements.

Searching for Ways to Work With Noise

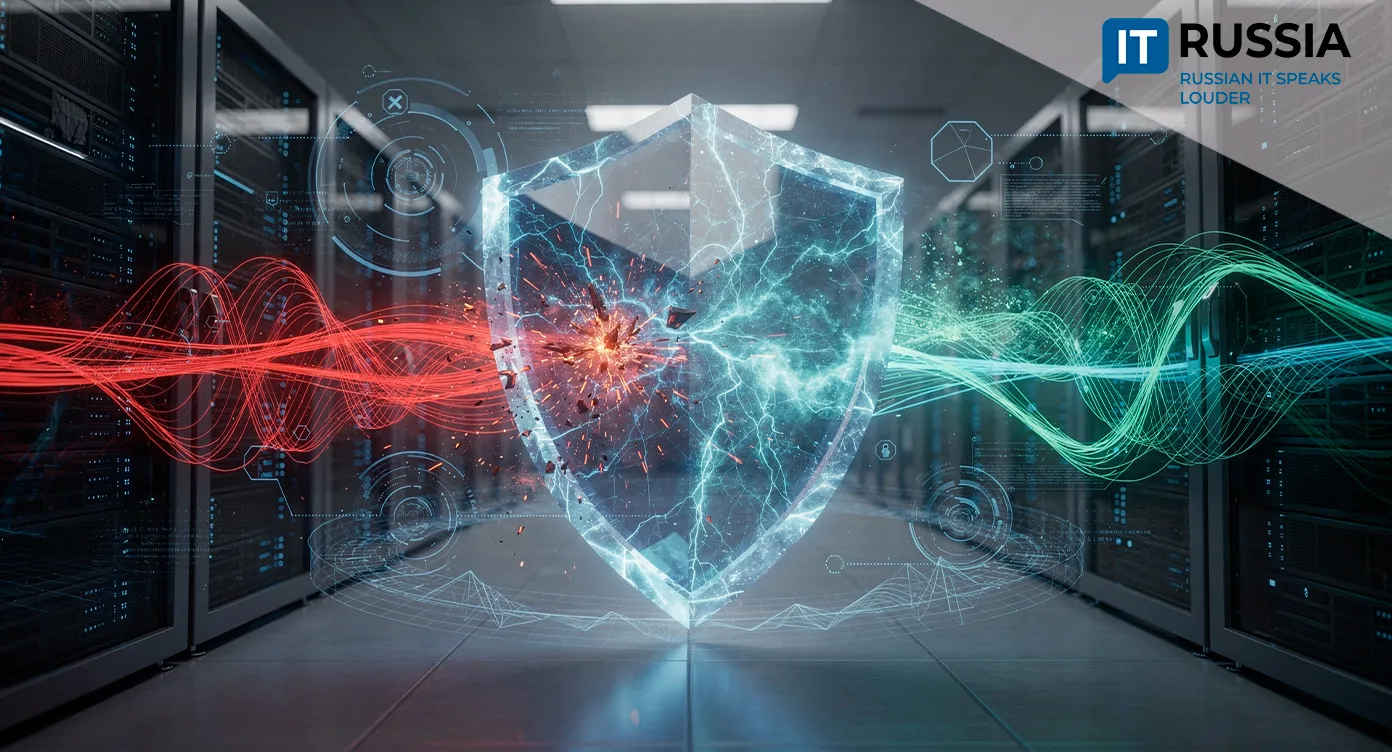

Any physical device is subject to noise, including thermal fluctuations, parameter instability and random signal disturbances. These effects introduce errors that engineers typically try to suppress. The researchers initially assumed that internal noise would degrade the performance of a neural network. Their results showed the opposite.

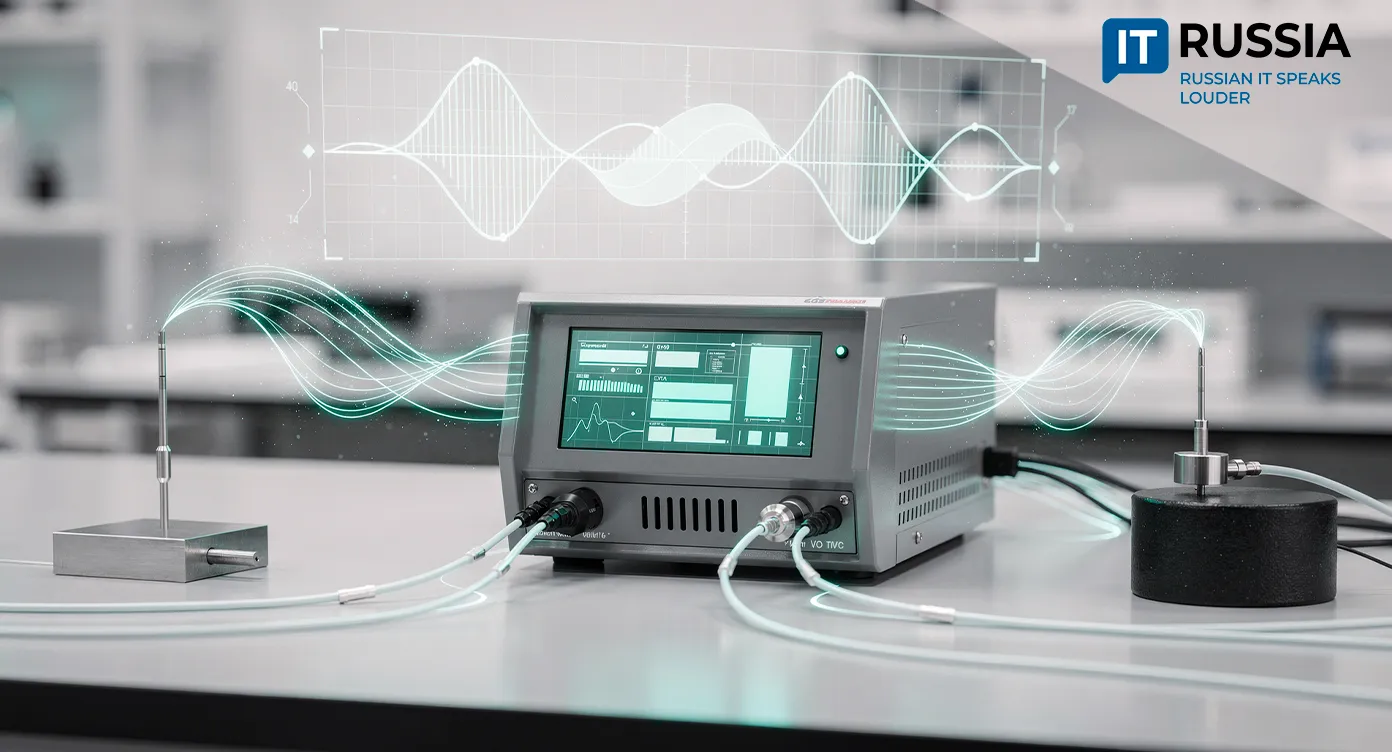

The team describes the outcome as unexpected. They anticipated that accuracy would fall and that they would need to develop strategies for mitigating noise during training. In their experiments, they modeled the impact of white Gaussian noise injected either into the neurons themselves or into the connections between them. The networks were trained to recognize images and to predict complex quasiperiodic and chaotic signals. The surprising finding was that noise during the training phase improved the system’s resistance to interference later on.

In their training experiments, the authors observed that deliberately exposing the network to noise while it learned enabled it to perform better under realistic and even challenging conditions. For certain types of noise exposure, the network could be made almost entirely robust to interference.
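To make the two injection points concrete, here is a minimal Python sketch of a forward pass through a tiny two-layer network, with white Gaussian noise added either to the hidden neurons or to the connections. It is an illustration only, not the researchers' actual model: the function name `noisy_forward`, the parameters `neuron_sigma` and `weight_sigma`, the additive form of the noise and the network size are all assumptions made for the example.

```python
import numpy as np

def noisy_forward(x, w_hidden, w_out, rng, neuron_sigma=0.0, weight_sigma=0.0):
    """Forward pass of a tiny two-layer network with optional Gaussian noise.

    neuron_sigma: std of additive white Gaussian noise on hidden-neuron outputs.
    weight_sigma: std of additive white Gaussian noise on the connections.
    Both parameters and the additive noise model are illustrative assumptions.
    """
    # Noise in the connections: perturb every weight independently.
    w_h = w_hidden + weight_sigma * rng.standard_normal(w_hidden.shape)
    w_o = w_out + weight_sigma * rng.standard_normal(w_out.shape)

    hidden = np.tanh(x @ w_h)
    # Noise in the neurons: perturb every hidden activation independently.
    hidden = hidden + neuron_sigma * rng.standard_normal(hidden.shape)
    return hidden @ w_o

rng = np.random.default_rng(0)
x = rng.standard_normal((4, 3))         # 4 samples, 3 input features
w_hidden = rng.standard_normal((3, 5))  # input -> hidden connections
w_out = rng.standard_normal((5, 2))     # hidden -> output connections

clean = noisy_forward(x, w_hidden, w_out, rng)
noisy_neurons = noisy_forward(x, w_hidden, w_out, rng, neuron_sigma=0.1)
noisy_weights = noisy_forward(x, w_hidden, w_out, rng, weight_sigma=0.1)
```

In a training loop, a fresh noise sample would be drawn on every pass, so the network would have to learn weights that still give good outputs when its neurons or connections are perturbed. That is the intuition behind the effect described above.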

Practical Significance

The importance of the Saratov team’s work lies in its implications for next-generation, energy-efficient hardware neural network devices. Such systems are expected to be used for image processing, signal analysis and autonomous computation in resource-constrained environments. Improving their resistance to physical interference is critical for real-world deployment, the press service of Russia’s Ministry of Science and Higher Education noted.

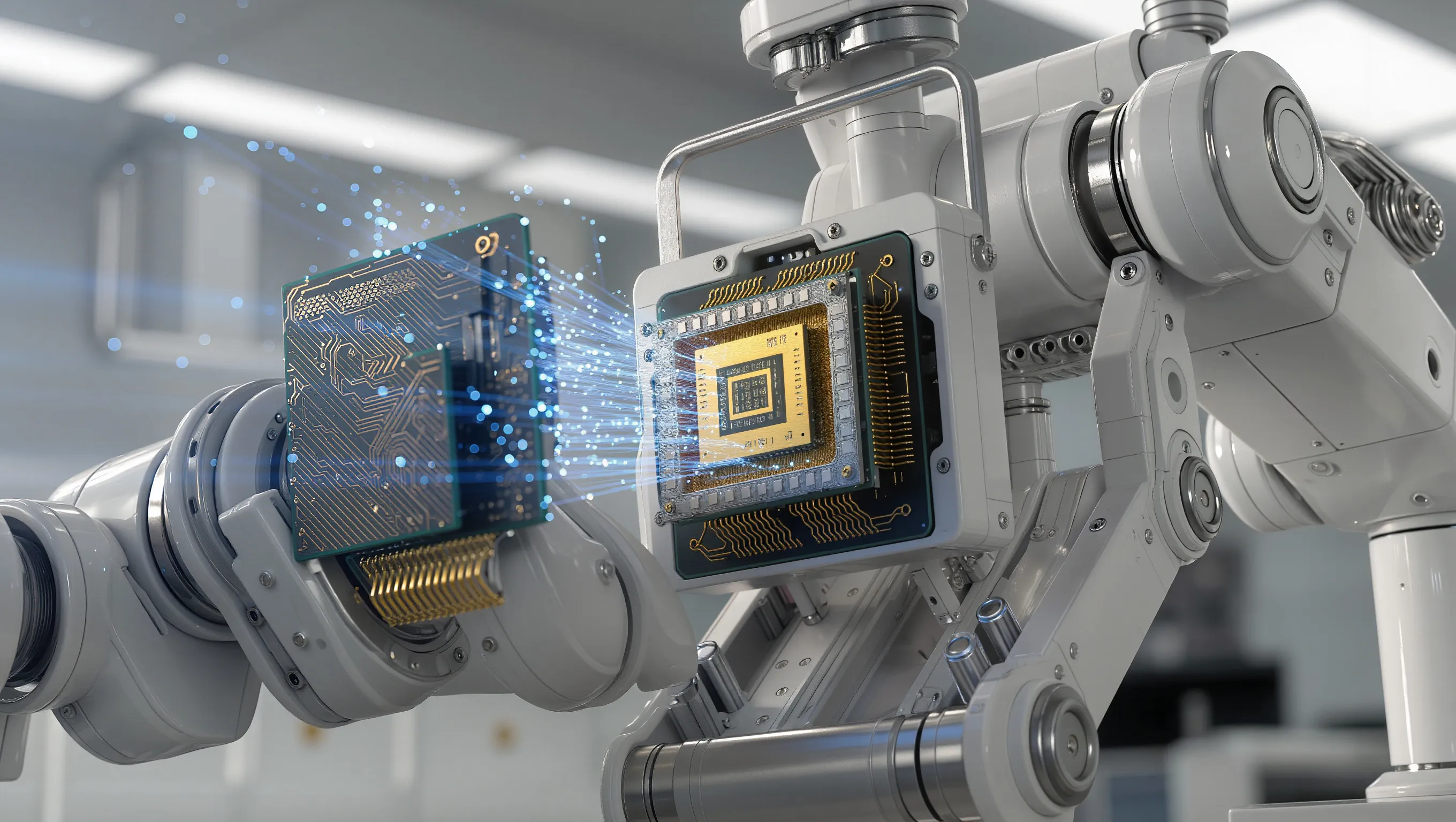

The researchers plan to extend the experiment. In the near future, they intend to apply their findings to more complex spiking neural networks. The research reframes one of the fundamental challenges of hardware artificial intelligence.

“If it is impossible to eliminate noise entirely, it can be transformed from a source of error into a mechanism for enhancing reliability. That shift in perspective is the main result of our study,” the university’s press service emphasized.

Similar studies have been conducted abroad since 2021. Noise injection methods, such as dropout and added stochastic noise, have long been used as a form of regularization in conventional neural network training. However, the role of physical noise in hardware systems has drawn focused attention only in the past two to three years. The Russian researchers were able to confirm a significant effect and provide practical justification for applying this approach to hardware AI systems: training networks with noise makes them more resilient to interference during real operation.

Where the Technology Could Be Used

According to specialists, the new technique could strengthen AI reliability in autonomous systems where noise is unavoidable, including sensors and robotics. It may also enhance the robustness of hardware neural networks used in neuromorphic computing, which are difficult to protect using traditional digital noise-suppression methods.

Forecasts Inspire Optimism

In the near term, the Saratov method could become standard practice in the design of hardware neural network accelerators and energy-efficient AI chips in Russia and internationally. The technology has the potential to advance robotics, autonomous sensing systems and edge AI, where resilience to noise is a defining requirement.

The discovery also opens the door to deeper collaboration with international research teams working on robust AI architectures and could help position Russian advances in AI training methodologies on the global stage.