Seeing Through the Glare: Russian Researchers Build a New Navigation System for Industrial Robots

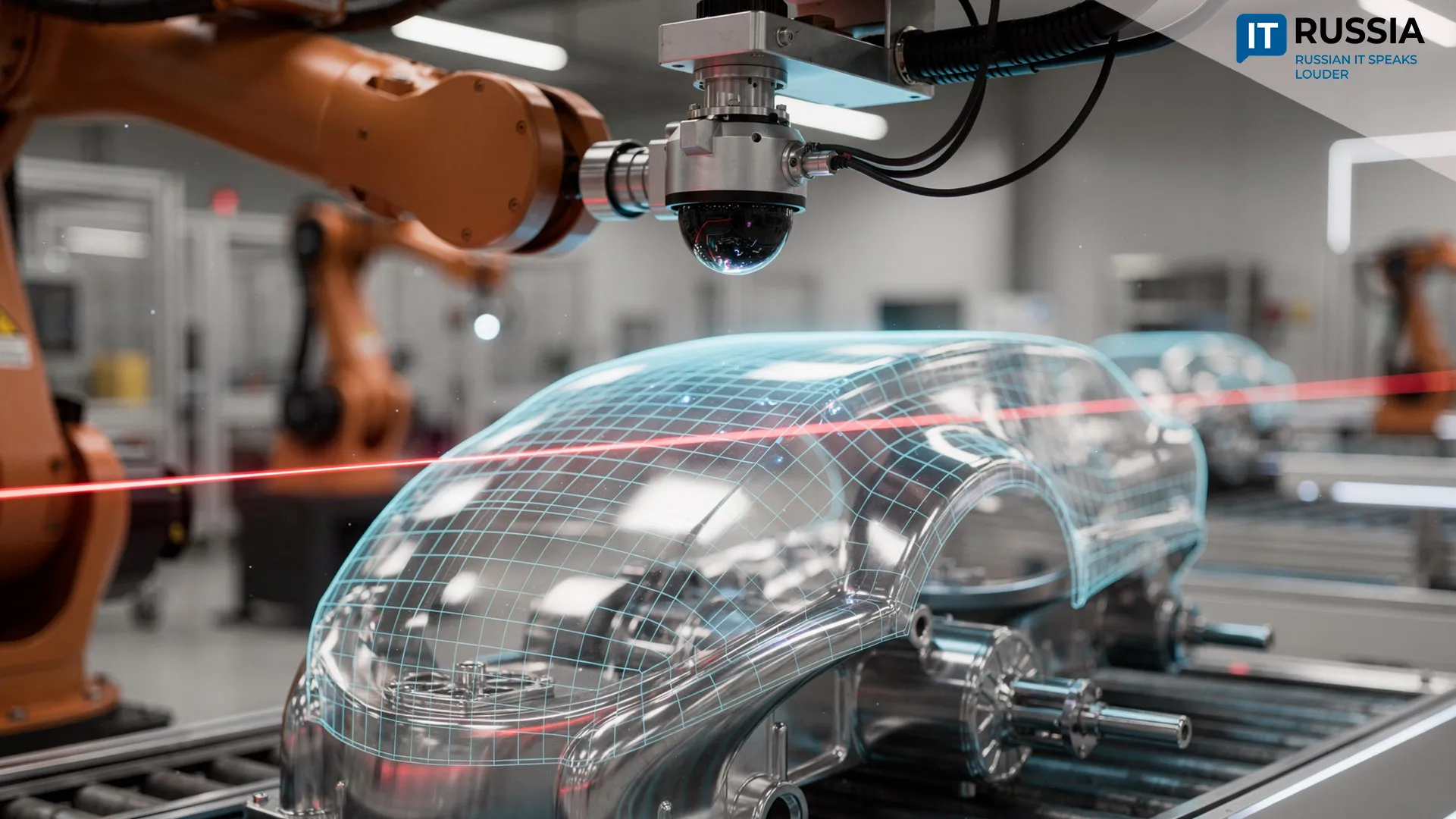

Researchers at South Ural State University (SUSU; Yuzhno-Uralskiy Gosudarstvennyy Universitet) have developed a robotic vision system that lets robots determine the position of objects reliably under difficult optical conditions, including glare, reflections, partial occlusion of a laser line and other visual interference. The project was supported by the Russian Science Foundation (Rossiyskiy Nauchnyy Fond) within the national initiative Science and Universities (Nauka i Universitety).

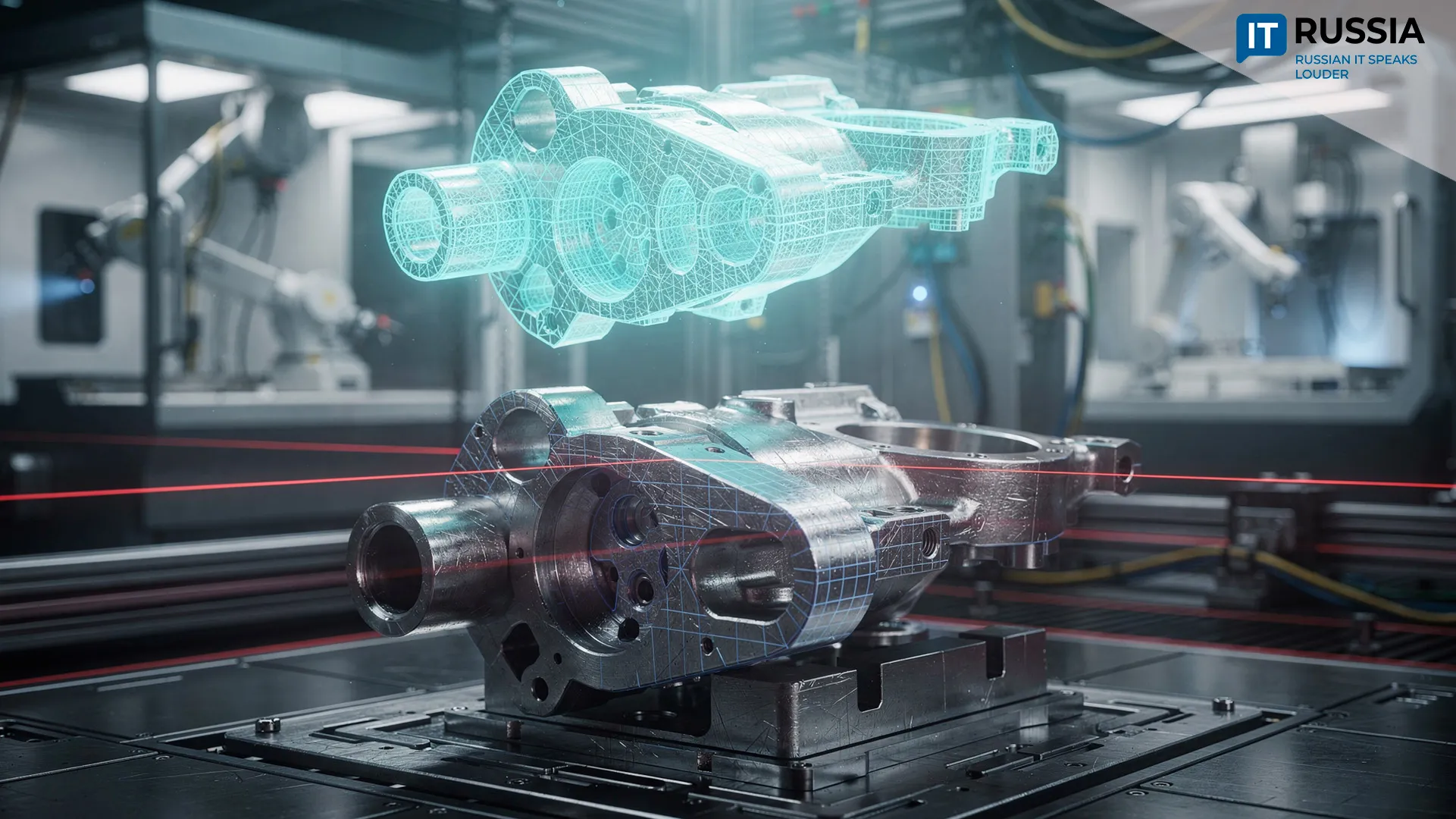

In recent years, advances in sensing and computing technologies have triggered rapid growth in industrial robotics. Cameras now serve as the primary way robots perceive their surroundings, turning machine vision into a core component of robotic control systems.

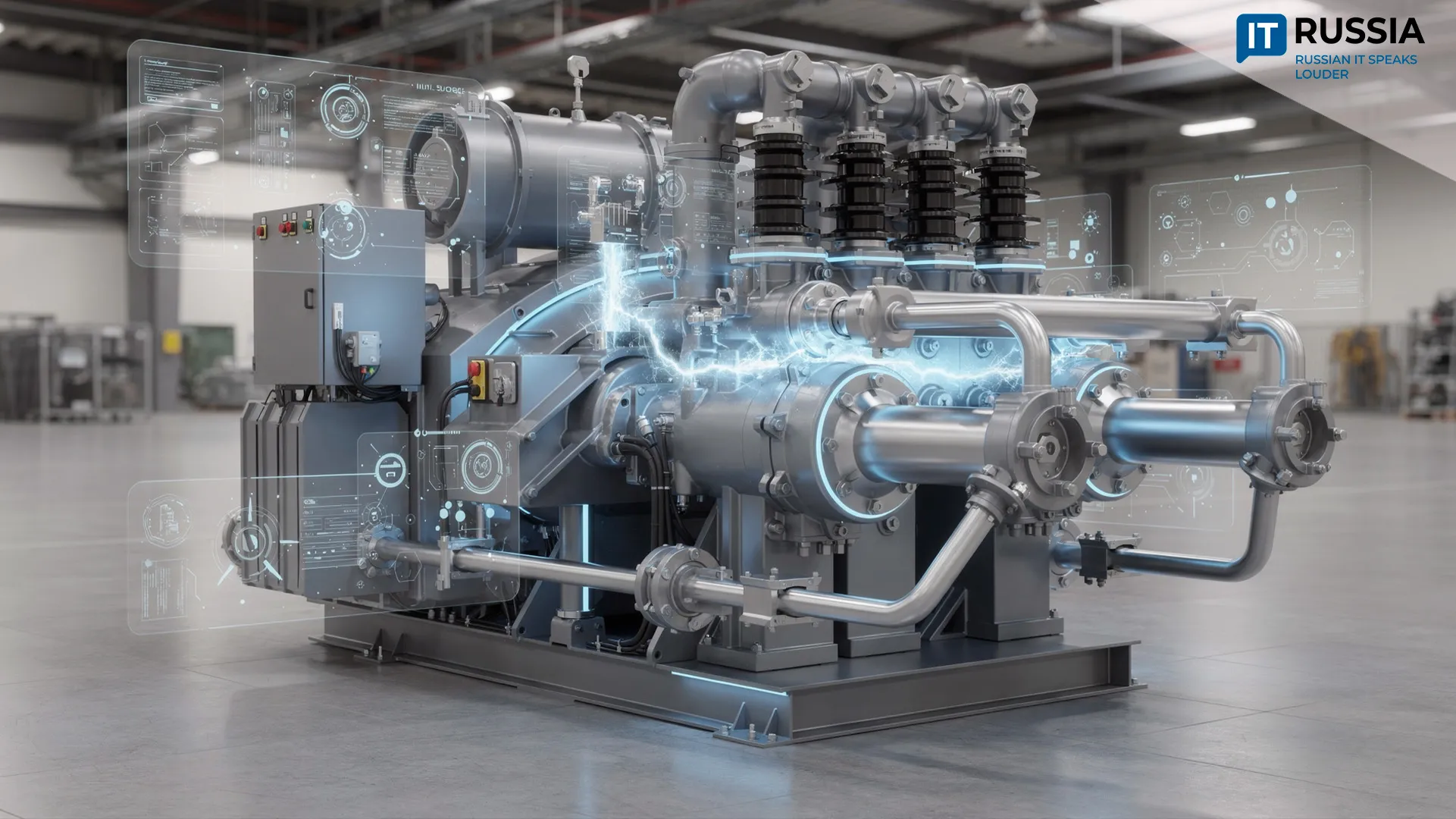

Because a monocular camera cannot recover depth on its own, many conventional approaches rely on binocular camera systems to reconstruct three-dimensional geometry. Such solutions, however, remain relatively expensive. As passive vision systems, they also give robots a limited field of view, typically no more than about 120 degrees, and they struggle in low light or complete darkness, which restricts their ability to build a complete model of the environment.

Seeing the Target Through Glare and Noise

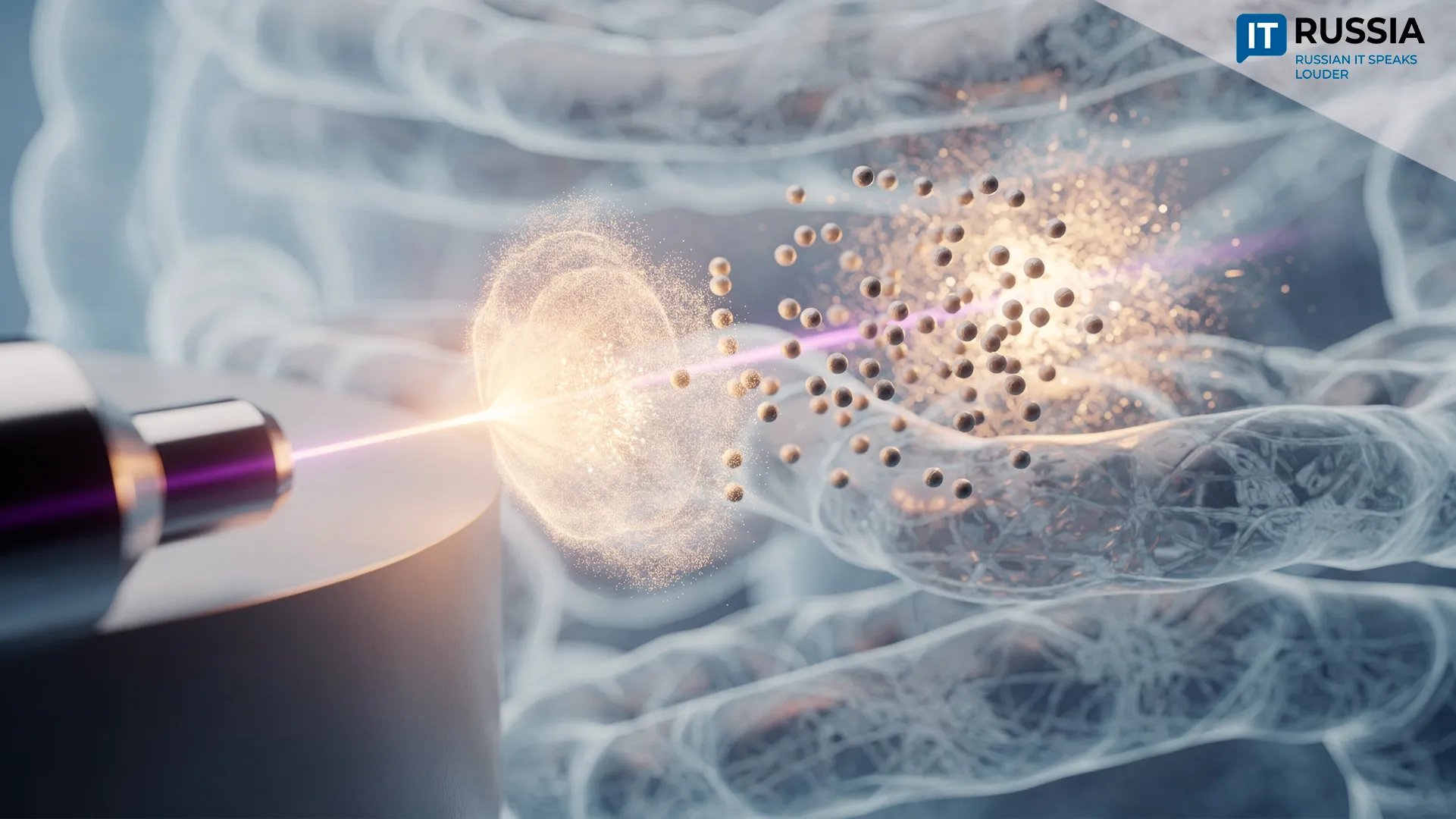

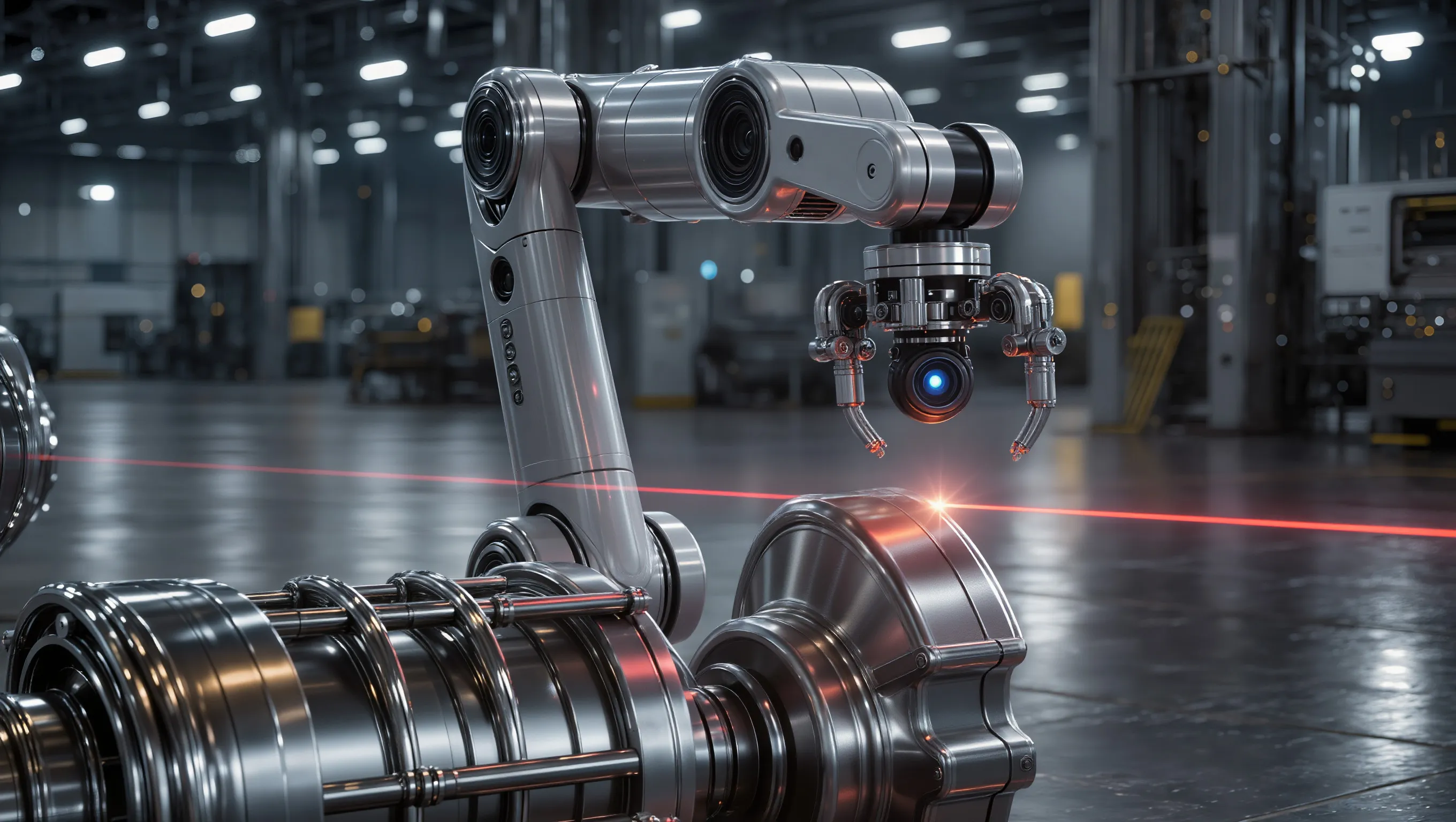

The SUSU team's navigation system is built on a new localization method that relies on a single panoramic camera combined with a laser line, which allows a robot to detect targets even through glare and optical interference. The authors of the project are Maksim Grigoriev, Doctor of Engineering and professor, and Ivan Kholodilin, associate professor in the Department of Electric Drives, Mechatronics and Electromechanics.

Modern robotic systems typically need either two cameras for stereo vision or an expensive laser rangefinder such as a lidar to estimate distance. The approach proposed by the Ural research team is fundamentally different. It uses just one panoramic camera with a 180-degree field of view, a line laser emitting at 650 nanometers (red light) and an algorithm that detects the laser line on an object in real time and converts its image coordinates into the three-dimensional position of the target.
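The article does not describe the team's calibration or camera model, but the general principle of laser-line triangulation with one camera can be sketched as follows. Everything specific here is an assumption made for illustration: the equidistant fisheye model (r = f * theta), the hypothetical function names `pixel_to_ray` and `intersect_laser_plane`, the 1000-pixel image circle and the laser plane 0.10 m below the camera. The idea is that a pixel on the detected laser line defines a viewing ray, and intersecting that ray with the known laser plane yields a 3D point.

```python
import numpy as np

def pixel_to_ray(u, v, cx, cy, f):
    """Map a pixel to a unit viewing ray, assuming an equidistant fisheye
    model (r = f * theta). The lens model is an assumption for illustration."""
    dx, dy = u - cx, v - cy
    r = np.hypot(dx, dy)            # radial distance from the image centre, px
    if r == 0:
        return np.array([0.0, 0.0, 1.0])   # pixel lies on the optical axis
    theta = r / f                   # angle between the ray and the optical axis
    s = np.sin(theta)
    return np.array([s * dx / r, s * dy / r, np.cos(theta)])

def intersect_laser_plane(ray, normal, offset):
    """Intersect a ray from the camera centre with the plane normal . X = offset.
    Returns the 3D point in camera coordinates, or None if there is no hit."""
    denom = float(np.dot(normal, ray))
    if abs(denom) < 1e-9:           # ray runs parallel to the laser plane
        return None
    t = offset / denom
    return t * ray if t > 0 else None

# Hypothetical setup: 1000 px image circle covering 180 degrees,
# laser plane 0.10 m below the camera (y axis points down).
f = 1000 / np.pi                    # so r = 500 px maps to theta = 90 degrees
ray = pixel_to_ray(500, 750, cx=500, cy=500, f=f)
point = intersect_laser_plane(ray, normal=np.array([0.0, 1.0, 0.0]), offset=0.10)
```

In this toy setup the pixel sits 45 degrees off the optical axis, so the recovered point lies 0.10 m below and 0.10 m in front of the camera. A real system would replace the assumed lens model and plane parameters with calibrated values.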

Reliable Operation in Difficult Visual Conditions

The main achievement of the new system lies in its ability to work reliably in situations where conventional approaches often fail. These include dark or glossy surfaces, red objects where the laser line may blend into the background, mirror-like reflections and even partial occlusion of the laser stripe. Experimental results show that compared with traditional extraction methods, the proposed approach reduces depth reconstruction error by 69.18 percent under interference conditions.
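To see why red objects are troublesome for simple extraction, consider a deliberately naive detector that picks, in every image column, the row with the strongest red excess. This is an illustrative baseline written for this article, not the SUSU method and not necessarily one of the traditional methods used in the team's comparison; the function name `naive_laser_rows`, the RGB channel order and the threshold are assumptions.

```python
import numpy as np

def naive_laser_rows(rgb, min_excess=50):
    """For each column, return the row with the largest red excess
    (red minus the stronger of green and blue), or -1 if no pixel is red enough."""
    img = rgb.astype(np.int32)                        # avoid uint8 wrap-around
    excess = img[..., 0] - np.maximum(img[..., 1], img[..., 2])
    rows = excess.argmax(axis=0)                      # first strongest row per column
    peak = excess.max(axis=0)
    return np.where(peak >= min_excess, rows, -1)

# Synthetic 5x4 scene: a laser stripe on row 3 covering columns 0..2.
scene = np.zeros((5, 4, 3), dtype=np.uint8)
scene[3, :3] = (255, 0, 0)
clean = naive_laser_rows(scene)

# Add a saturated red object above the stripe in column 0.
scene[0:2, 0] = (255, 0, 0)
confused = naive_laser_rows(scene)
```

On the clean scene the detector finds row 3 in every lit column. Once the red object is added, column 0 reports the object instead of the stripe, because colour alone no longer distinguishes the two. This is the kind of ambiguity that a more robust extraction stage has to resolve.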

To illustrate the challenge, imagine a robot trying to identify a single target marked by a laser within a cluster of red objects while bright light produces strong reflections across the scene. This is precisely the type of task the SUSU researchers addressed. Unlike conventional solutions, the system performs the task without relying on stereo cameras or costly lidar sensors.

Another advantage of the technology is its minimal hardware configuration. Whereas conventional robotic perception systems depend on multiple cameras or expensive lidar devices, the new design reduces the number of components, making the system more compact and significantly lowering overall cost.

The technology represents an important step forward for Russia’s industrial robotics sector. By increasing autonomy, reliability and measurement accuracy under complex visual conditions, the system could help manufacturers deploy robots in environments where machine vision previously struggled. For domestic industry, the development also supports efforts to reduce dependence on imported lidar systems.

Future Development

One current limitation of the technology lies in the object recognition stage performed by a neural network, which takes 2.93 seconds and accounts for about 86.2 percent of the total processing time. The researchers plan to optimize this stage by using more powerful graphics accelerators and more compact neural network architectures, bringing the system closer to real-time operation.
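The two reported figures imply the total processing time and show how much a faster recognition stage could help. The short calculation below uses only the 2.93 seconds and 86.2 percent stated above; the 10x speed-up is a hypothetical value chosen for illustration, and the helper `total_after_speedup` assumes the remaining stages stay unchanged.

```python
recognition_s = 2.93        # neural-network recognition time reported in the article
recognition_share = 0.862   # its reported share of total processing time

total_s = recognition_s / recognition_share   # implied total time per cycle
other_s = total_s - recognition_s             # everything except recognition

def total_after_speedup(k):
    """Total time if only the recognition stage becomes k times faster."""
    return other_s + recognition_s / k

print(f"implied total: {total_s:.2f} s, other stages: {other_s:.2f} s")
print(f"with a hypothetical 10x faster recognition: {total_after_speedup(10):.2f} s")
```

The implied total is roughly 3.4 seconds per cycle, of which about 0.47 seconds is spent outside recognition. That remainder sets a floor on what optimizing the neural network alone can achieve.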

The work of the research team led by Professor Maksim Grigoriev aligns with a broader global trend in robotics: replacing expensive sensor hardware with smarter vision algorithms and active illumination techniques. This shift could pave the way for more affordable and compact robotic systems for industrial applications.