Pixels With Clinical Meaning: Russian Researchers Develop an AI Model That Thinks Like a Radiologist

Russian engineers have developed an AI model capable of modeling how a radiologist’s gaze moves across an X-ray image. The technology could improve diagnostic accuracy for conditions such as pneumonia and heart failure.

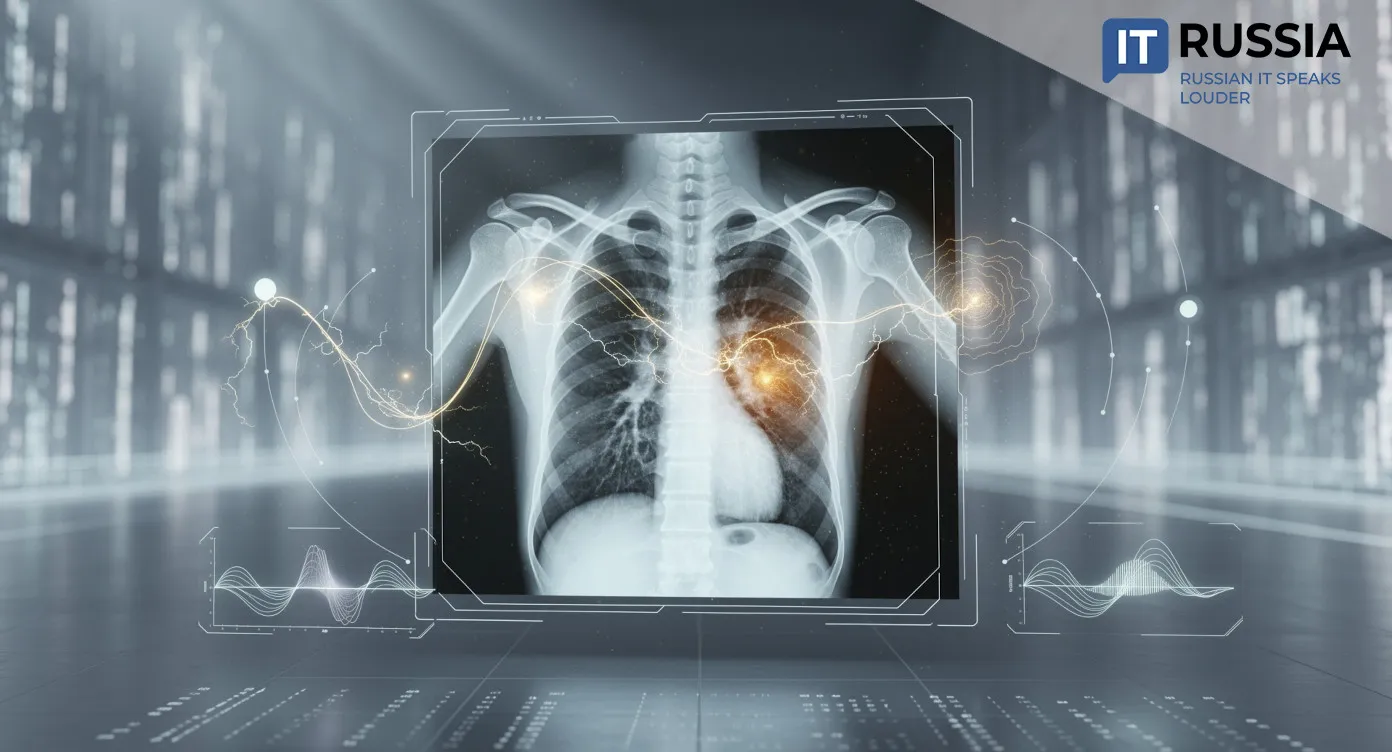

Engineers at Innopolis University in Kazan have developed a model called LogitGaze-Med. The system predicts which regions of an X-ray image a radiologist is likely to focus on while searching for pathology. Rather than relying only on visual patterns, the model also evaluates the diagnostic significance of specific image regions, such as anatomical structures, opacities, cardiac contours, and other disease indicators that the human eye can sometimes miss. For clinicians, the technology could become a practical decision-support tool during diagnosis.

The model is multimodal and processes three types of data at once. The first is visual features extracted by medical imaging algorithms. The second is text-based diagnostic descriptions, such as “normal” or “pneumonia.” The third is the semantic meaning of individual image patches, such as bone structures, cardiac regions, and areas of opacity. These patch-level semantics are extracted with the “logit lens” method, which lets researchers look inside the neural network and determine which image fragments carry clinical significance. The project was developed by Dmitry Lvov and Ilya Pershin with support from Russia’s Ministry of Economic Development.
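The logit lens is a general interpretability technique: it takes a network’s intermediate hidden state and passes it through the final output (“unembedding”) layer, reading off which vocabulary items that layer would predict at that point. The sketch below applies the idea to image patches with a toy four-word vocabulary of clinical concepts. Everything in it (`VOCAB`, `UNEMBED`, `logit_lens`, the 3-dimensional hidden states and their values) is a hypothetical illustration, not LogitGaze-Med’s code; real models are far larger and usually apply the final normalization layer before projecting.

```python
import math

# Hypothetical toy vocabulary of clinical concepts (illustrative assumption).
VOCAB = ["bone", "heart", "opacity", "background"]

# Hypothetical unembedding matrix: one row per vocabulary term, with the same
# width as the hidden states (3 dimensions here for readability).
UNEMBED = [
    [1.0, 0.0, 0.0],  # bone
    [0.0, 1.0, 0.0],  # heart
    [0.0, 0.0, 1.0],  # opacity
    [0.2, 0.2, 0.2],  # background
]


def softmax(logits):
    """Numerically stable softmax over a list of floats."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def logit_lens(hidden_states, unembed=UNEMBED, vocab=VOCAB):
    """Project each patch's intermediate hidden state onto the vocabulary.

    Returns, for every patch, the most likely term and its probability.
    """
    readouts = []
    for h in hidden_states:
        # Logit for each term = dot product of its unembedding row with h.
        logits = [sum(w * x for w, x in zip(row, h)) for row in unembed]
        probs = softmax(logits)
        best = max(range(len(vocab)), key=lambda i: probs[i])
        readouts.append((vocab[best], probs[best]))
    return readouts


# Hypothetical hidden states for three image patches from a middle layer.
patches = [
    [2.5, 0.1, 0.3],  # aligned with "bone"
    [0.2, 3.0, 0.4],  # aligned with "heart"
    [0.1, 0.2, 2.8],  # aligned with "opacity"
]

for i, (term, p) in enumerate(logit_lens(patches)):
    print(f"patch {i}: {term} ({p:.2f})")
```

Reading out a label per patch in this way is what makes the intermediate representation inspectable: instead of an opaque vector, each patch gets a human-readable concept and a confidence.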

Modeling the Radiologist’s Eye Path

LogitGaze-Med predicts complete gaze trajectories: the sequence of image coordinates a radiologist looks at and the time spent analyzing each region. The model behaves much like a practicing radiologist working through a specific clinical task, whether that task is searching for pneumonia, signs of heart failure, or other abnormalities.
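The article does not describe the model’s output format. As a minimal sketch, assuming a trajectory is stored as an ordered list of fixations (normalized x, y coordinates plus a duration), the snippet below shows how such a trajectory could be summarized into time spent per anatomical region. The `Fixation`, `Region`, and `dwell_time` names, the rectangular region boxes, and all numbers are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Fixation:
    """One point in a gaze trajectory (hypothetical representation)."""
    x: float          # horizontal position, normalized to [0, 1]
    y: float          # vertical position, normalized to [0, 1]
    duration_ms: int  # time the gaze rests at this point


@dataclass(frozen=True)
class Region:
    """Axis-aligned box marking an anatomical region (hypothetical)."""
    name: str
    x0: float
    y0: float
    x1: float
    y1: float

    def contains(self, f: Fixation) -> bool:
        return self.x0 <= f.x <= self.x1 and self.y0 <= f.y <= self.y1


def dwell_time(scanpath, regions):
    """Sum fixation durations per region; unmatched fixations go to 'other'."""
    totals = {r.name: 0 for r in regions}
    totals["other"] = 0
    for f in scanpath:
        # Assign each fixation to the first region that contains it.
        match = next((r.name for r in regions if r.contains(f)), "other")
        totals[match] += f.duration_ms
    return totals


# Toy regions on a frontal chest X-ray (patient's right lung on image left).
regions = [
    Region("right_lung", 0.05, 0.15, 0.38, 0.85),
    Region("heart", 0.40, 0.45, 0.65, 0.85),
    Region("left_lung", 0.66, 0.15, 0.95, 0.85),
]

# A hypothetical five-fixation trajectory.
scanpath = [
    Fixation(0.50, 0.60, 300),
    Fixation(0.20, 0.40, 250),
    Fixation(0.80, 0.50, 400),
    Fixation(0.55, 0.70, 150),
    Fixation(0.50, 0.05, 100),
]

print(dwell_time(scanpath, regions))
```

Keeping the fixation order intact matters: the same total dwell times can come from very different search strategies, and it is the sequence, not just the totals, that the model is described as predicting.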

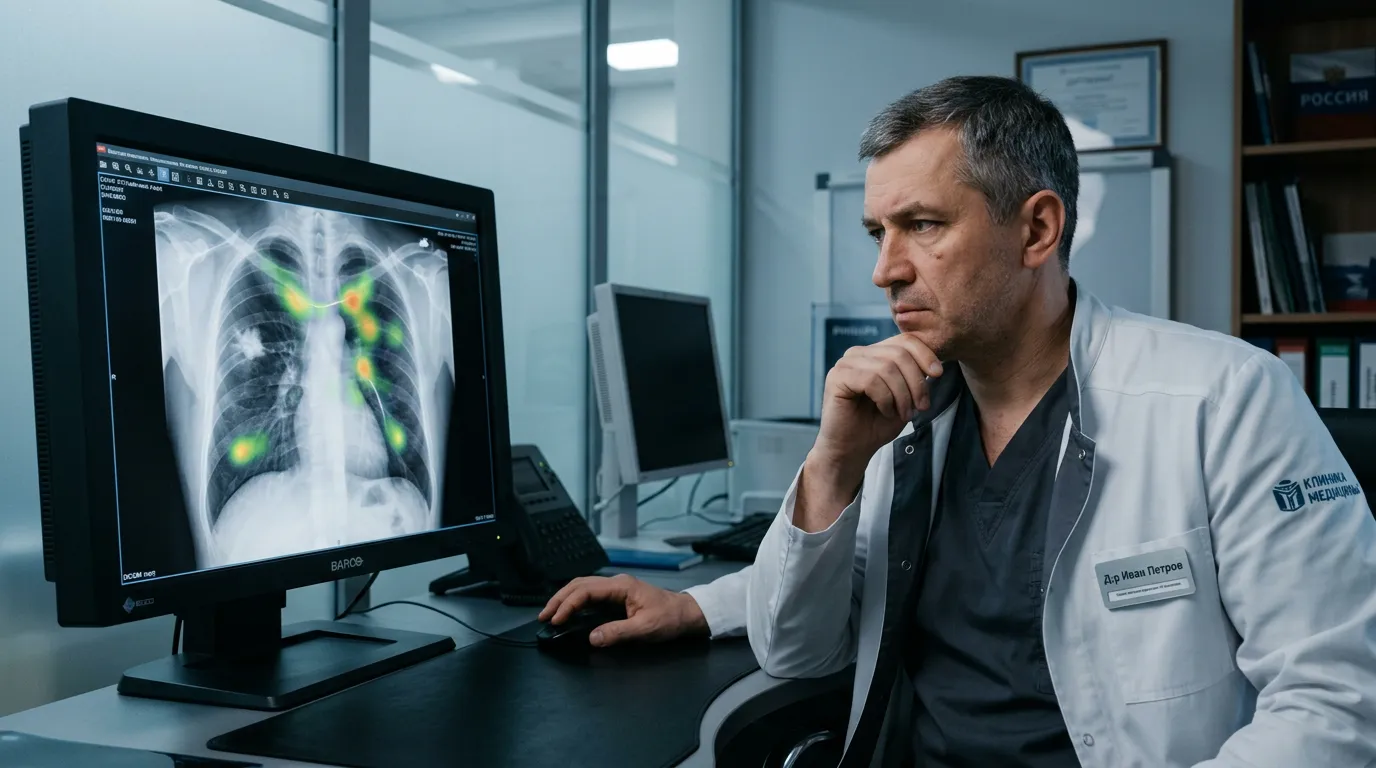

These synthetic gaze trajectories underwent blinded expert review. A practicing radiologist rated their visual realism at 4.3 out of 5 and their clinical significance at 4.2 out of 5. Most notably, the expert correctly distinguished AI-generated trajectories from real physician eye-tracking recordings only 58% of the time.

Diagnostic Accuracy Gains

LogitGaze-Med works differently from previous approaches. Conventional methods typically rely on general-purpose models that highlight the most visually salient areas of an image, regardless of which condition the physician is looking for. The new model instead adapts to a specific diagnostic objective.

Compared with existing alternatives, the researchers improved eye-movement prediction quality by 20% to 30%. The accuracy of automated pathology detection on chest X-rays, including heart failure and pneumonia, also increased by more than 5%. In medical imaging diagnostics, even incremental percentage gains can have clinical significance.

Clinical Value for Physicians and Patients

One of the main challenges the developers are addressing is the shortage of real-world radiologist eye-tracking datasets. Collecting this information is expensive, technically difficult, and time-consuming. The Innopolis model works around this shortage by learning to generate realistic gaze trajectories on its own.

These synthetic trajectories could also serve as a support tool for early-career physicians, highlighting in real time the regions that deserve closer attention and, in effect, reproducing the visual-search patterns of experienced radiologists.

For patients, the technology could translate into more accurate diagnoses and fewer missed serious conditions at early stages.

Implications for Russia and Global Healthcare

The project demonstrates that Russian AI research in healthcare is operating at a globally competitive level. In medicine, where trust in new technologies remains especially important, that credibility matters.

Globally, the technology could support the development of training simulators for radiologists. Future physicians may eventually train using synthetic gaze trajectories that teach effective visual-search patterns before they begin working with real patients.

Importantly, the platform is not tied to any specific equipment manufacturer or national healthcare system.

The model could eventually serve as the foundation for commercial products, including diagnostic-support systems, medical-school simulators, and telemedicine tools. It can also be adapted for different clinical tasks and different types of imaging studies beyond chest X-rays.

Large-scale deployment will still require additional validation across multiple healthcare institutions. Even so, the fact that the research emerged from a Russian IT-focused university and received strong expert evaluations already points to substantial export potential. Technologies that make AI systems more transparent and explainable are increasingly needed anywhere medical imaging is used.

The AI research center at Innopolis University was established in 2021 as part of a federal initiative. It focuses on five major areas: materials discovery, transportation, logistics, computer vision, and medicine. In 2025, the center was assigned a specialization in the digital acceleration of scientific research. Its industry partners include SIBUR, Sber, MTS, and other major companies.