Russian Scientists Develop “Energy-Efficient” Neural Networks

A new approach devised by researchers in Saratov trains artificial intelligence with lower energy consumption.

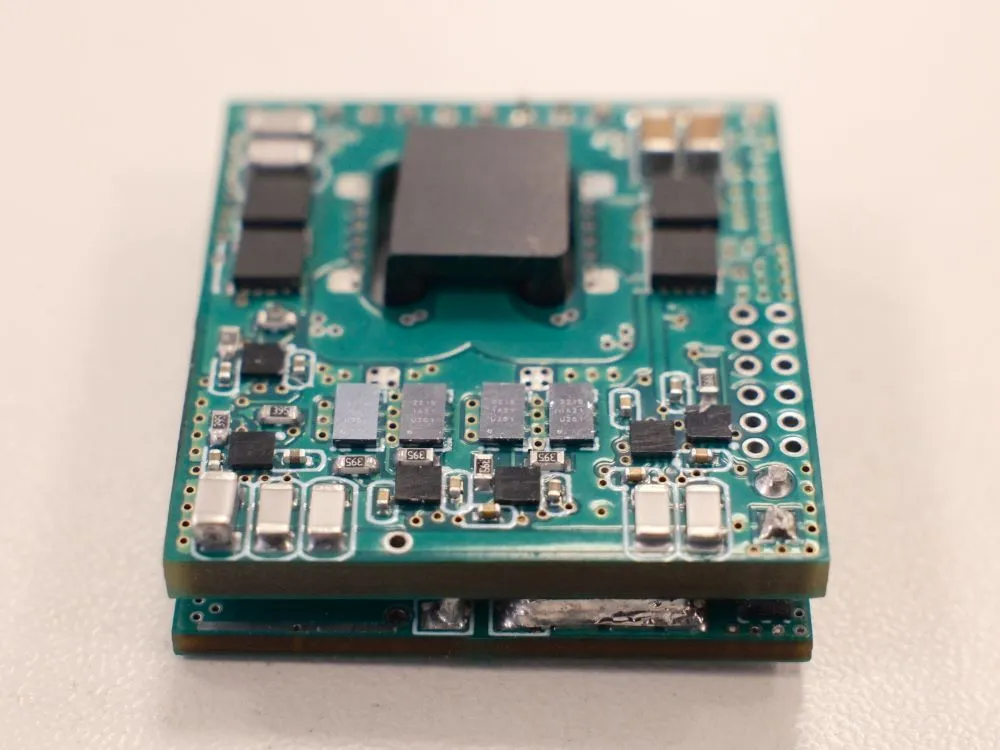

Russian scientists have applied a new energy-efficient approach to building neural networks, the Ministry of Science and Higher Education said. A team at Saratov State University (named after Chernyshevsky) proposed replacing classical artificial neurons with simplified but dynamic cell models.

The approach brings AI systems closer to the way the human brain works. As the university noted, spiking neural networks based on oscillatory FitzHugh–Nagumo neurons and trained without supervision can operate more efficiently than conventional neural network models. The technology could be used to develop autonomous sensor devices, neuromorphic hardware, and low-power electronics.

Living Mechanisms Instead of Pure Mathematics

Conventional neural networks are typically built as sets of mathematical functions that consume computing resources continuously. In the human body, neurons activate only when needed and remain idle most of the time. The neural network developed by the Saratov researchers follows a similar principle, generating impulses – known as spikes – only in response to input signals. In effect, computations occur in a way that closely resembles a living system.
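To make the "spikes only in response to input" idea concrete, here is a minimal sketch of a single FitzHugh–Nagumo neuron in Python. It is not the Saratov team's network and involves no learning. The article gives no equations or parameters, so the textbook form of the model, the parameter values (a = 0.7, b = 0.8, eps = 0.08), the spike threshold, the input amplitude, and the function name `simulate_fhn` are all assumptions chosen for illustration.

```python
def simulate_fhn(current, t_end=200.0, dt=0.05,
                 a=0.7, b=0.8, eps=0.08, threshold=1.0):
    """Integrate one FitzHugh-Nagumo neuron with the forward Euler method.

    Textbook form of the model (assumed here, not taken from the article):
        dv/dt = v - v**3 / 3 - w + I(t)
        dw/dt = eps * (v + a - b * w)
    `current` is a function t -> I(t). Returns the times at which the
    membrane variable v crosses `threshold` from below (the "spikes").
    """
    # Start close to the resting state of the model for zero input.
    v, w = -1.2, -0.625
    spike_times = []
    for k in range(int(t_end / dt)):
        t = k * dt
        dv = v - v ** 3 / 3.0 - w + current(t)
        dw = eps * (v + a - b * w)
        v_next = v + dt * dv
        w += dt * dw
        # Record an upward crossing of the illustrative threshold.
        if v < threshold <= v_next:
            spike_times.append(t + dt)
        v = v_next
    return spike_times


# No input: the neuron sits at rest and emits nothing.
quiet = simulate_fhn(lambda t: 0.0)

# Input switched on between t = 50 and t = 150 (arbitrary illustrative values).
driven = simulate_fhn(lambda t: 0.5 if 50.0 <= t < 150.0 else 0.0)

print("spikes without input:", len(quiet))
print("spikes with input:   ", len(driven))
```

With these assumed parameters the neuron stays silent at rest and begins to fire only once the input is switched on, which is the behavior the article describes at the level of a single cell.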

During experiments, the neural network was trained to recognize images. Even on this relatively simple task, the network showed stable learning, classifying images with up to 80% accuracy. The researchers also identified the conditions under which the network operates reliably and found that its classification capability remains intact even under moderate interference.