A New Model from Moscow Researchers Could Redefine How Algorithms Are Evaluated

Researchers at Lomonosov Moscow State University have developed a stochastic model designed to assess the time complexity of database-driven algorithms more realistically. Instead of relying on simplified theoretical assumptions, the approach attempts to capture how algorithms behave under uncertainty – a shift that could influence everything from large-scale data infrastructure to government digital services.

Rethinking Performance Evaluation in Real Systems

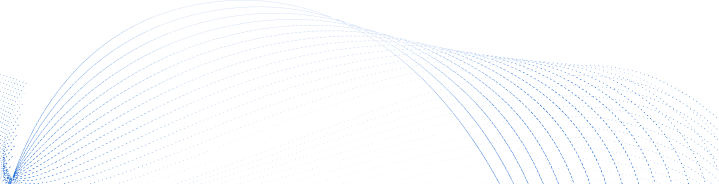

Specialists from the Faculty of Computational Mathematics and Cybernetics at Lomonosov Moscow State University have introduced a stochastic framework for analyzing the time complexity of computational algorithms that operate on databases.

The proposed approach aims to provide a more accurate assessment of algorithmic behavior under uncertainty and variable input conditions – characteristics typical of real-world computing systems rather than textbook environments.

Conventional methods summarize performance with a single worst-case bound or average-case estimate. The new model instead incorporates a wide range of probabilistic factors relevant to database operations: query structure, data distribution, access patterns and interactions between system components. The MSU development combines these elements using stochastic modeling techniques.
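Here is a toy illustration of why factors such as data distribution and access patterns matter. It is not the MSU formulation, which the press release does not spell out. The sketch assumes a plain linear scan over 1,000 stored keys and computes the expected number of comparisons under different access patterns. The function names and the Zipf-like popularity weights are hypothetical choices made only for illustration.

```python
# Toy model (hypothetical, not the MSU model): a linear scan over n stored keys,
# where finding the key at position i (0-based) costs i + 1 comparisons.

def expected_scan_cost(weights):
    """Expected comparisons when the key at position i is requested with
    probability proportional to weights[i]."""
    total = sum(weights)
    return sum((i + 1) * w for i, w in enumerate(weights)) / total

def zipf_weights(n, s=1.0):
    """Zipf-like popularity: the key of rank r (1 = most popular) gets weight 1 / r**s."""
    return [1.0 / r ** s for r in range(1, n + 1)]

n = 1000
worst_case = n                                         # key stored last (or absent)
uniform = expected_scan_cost([1.0] * n)                # every key equally likely
hot_first = expected_scan_cost(zipf_weights(n))        # popular keys stored first
hot_last = expected_scan_cost(zipf_weights(n)[::-1])   # popular keys stored last

print(f"worst case:           {worst_case}")
print(f"uniform access:       {uniform:.1f}")
print(f"Zipf, hot keys first: {hot_first:.1f}")
print(f"Zipf, hot keys last:  {hot_last:.1f}")
```

Under uniform access the expected cost is 500.5 comparisons. With Zipf-like access and the popular keys stored first, it drops to about 133.6. Storing the same popular keys last raises it to about 867.4. The worst case is 1,000 comparisons in every scenario, so a single worst-case bound cannot distinguish between these three very different behaviors.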

According to the university’s press service, the researchers treat the computational process itself as stochastic and describe it through probabilistic characteristics of execution time. This makes it possible not only to derive asymptotic bounds but also to analyze runtime distributions in applied settings, giving a more precise view of how a system performs under operational conditions.
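A small Monte Carlo sketch can illustrate what it means to treat execution time as a random variable and study its distribution. The cost model is entirely hypothetical and is not the MSU model. In it, a query touches a random number of pages, and each page is either a cache hit or a miss. The constants `MAX_PAGES`, `HIT_COST`, `MISS_COST` and `HIT_RATE` are arbitrary assumptions. Sampling many executions produces an empirical runtime distribution, which is then summarized by percentiles alongside the worst-case bound.

```python
import random
import statistics

# Hypothetical cost parameters (arbitrary time units), chosen only for illustration.
MAX_PAGES = 20      # largest number of pages a query may touch
HIT_COST = 1.0      # time to read a page already in the buffer cache
MISS_COST = 10.0    # time to read a page from storage
HIT_RATE = 0.8      # probability that a page is found in the cache

def sample_query_time(rng: random.Random) -> float:
    """Draw one execution time for a hypothetical query."""
    # Query structure / selectivity: the number of pages touched is random.
    pages = rng.randint(1, MAX_PAGES)
    # Access pattern: each page is independently a cache hit or a miss.
    return sum(HIT_COST if rng.random() < HIT_RATE else MISS_COST
               for _ in range(pages))

def runtime_profile(samples: int = 10_000, seed: int = 42) -> dict:
    """Summarize the empirical runtime distribution next to the worst case."""
    rng = random.Random(seed)
    times = [sample_query_time(rng) for _ in range(samples)]
    cuts = statistics.quantiles(times, n=100)  # 99 cut points: p1 ... p99
    return {
        "worst_case": MAX_PAGES * MISS_COST,
        "mean": statistics.fmean(times),
        "p50": cuts[49],
        "p95": cuts[94],
        "p99": cuts[98],
    }

profile = runtime_profile()
for name, value in profile.items():
    print(f"{name:>10}: {value:7.1f}")
```

With these parameters the analytic mean is 10.5 pages × 2.8 time units per page = 29.4 units, while the worst case is 200 units. The sampled mean should land close to 29.4. The percentiles show where the bulk and the tail of the distribution lie between those two figures.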

“Using stochastic models allows us to describe the time complexity of computational tasks interacting with databases more accurately. This approach enables consideration of real operational scenarios and evaluation of system behavior not only in theory but also in applied contexts,” says Andrei Borisov, Professor of Mathematical Statistics at the Faculty of Computational Mathematics and Cybernetics, MSU.

Improving algorithm evaluation remains one of the core challenges in computer science. Over time, the MSU model could contribute to more efficient infrastructure in data processing systems, internet services, banking platforms and public digital services, where algorithmic performance directly affects scalability, reliability and cost.

Export Potential and Applied Pathways

The direct export potential of the development appears limited at this stage because it is a methodological framework rather than a software package. However, if the model is embedded into concrete engineering products, its commercial relevance could expand significantly.

Experts suggest the framework could inform commercial performance-evaluation tools, compiler optimizers and application performance monitoring (APM) systems. By modeling runtime variability more precisely, such tools could improve system tuning and capacity planning.

Within Russia, the model could be integrated into educational programs focused on algorithms and complexity theory. It may also support corporate analytics by providing a more rigorous basis for evaluating and stress-testing large-scale projects.

Related Research Directions

Algorithmic behavior analysis intersects with several adjacent research domains. At MSU, similar modeling approaches have already been applied in efforts to identify cyberthreats within machine learning systems and to simulate their impact.

Another line of work concerns Earth observation. This year, MSU researchers, together with colleagues from the Institute of Computational Mathematics, proposed a new method for processing satellite data that arrives with delays. The approach improves the accuracy of forecasts based on remote sensing by accounting for uneven data arrival in numerical modeling of dynamic processes. These methods can be applied not only to Earth observation data but also to other modeling tasks where system states must be reconstructed from delayed or incomplete information.

Development Outlook

In broader terms, the new framework from the Faculty of Computational Mathematics and Cybernetics represents a step toward making algorithm evaluation more practice-oriented. Future research may translate the theoretical model into concrete tools – libraries and testing instruments – built on the stochastic foundation. Such implementations could emerge within the next two to three years. Growing interest in precise modeling of algorithmic behavior also opens the possibility of integrating these ideas with artificial intelligence systems.